An exhibition investigating the potential of generative design.

CHALLENGE

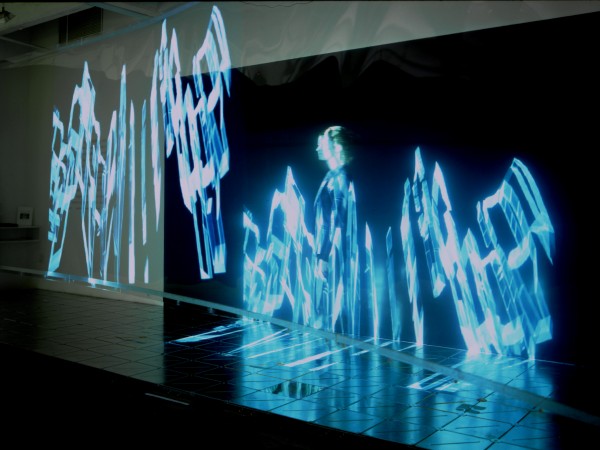

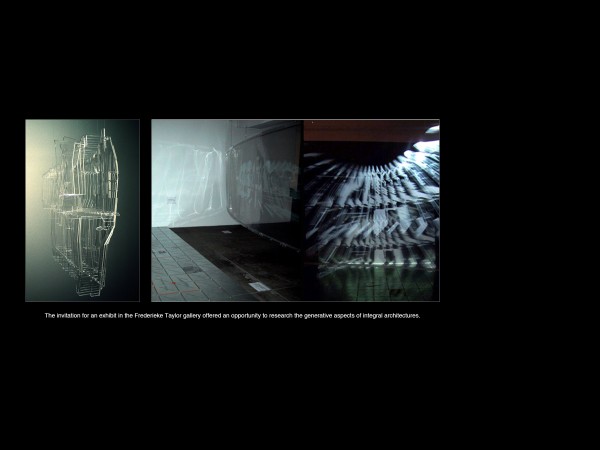

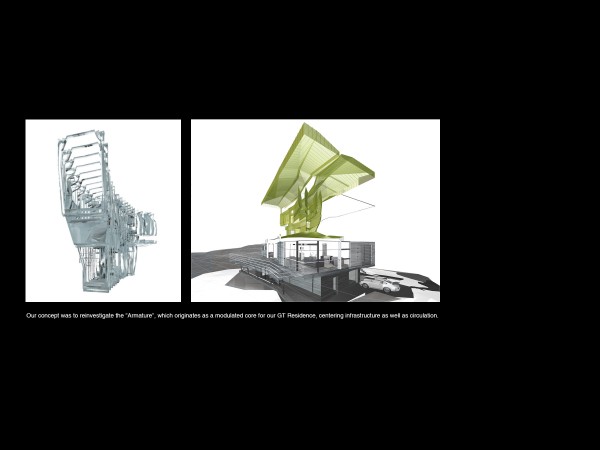

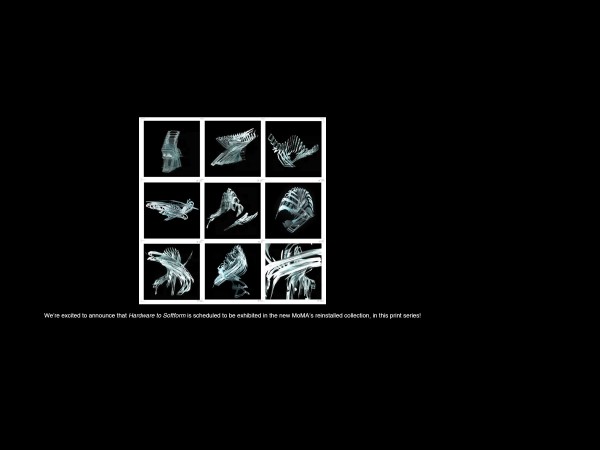

“From HardWare to SoftForm” is a 3-D digital interactive installation of the ‘Armature.’ It has been exhibited at the Frederieke Taylor Gallery in Chelsea, NYC (September 2002), at the ‘Art & Idea’ Gallery in Mexico City (September 2004) and in Monterrey (November 2004). It is also part of MoMA’s permanent collection and went on view in MoMA’s new extension in November 2019. For the project, we investigated the potential of ‘generative design’: a computational design process that arrives at a final form through a series of algorithmically created ‘generations’. As in biological evolution, each generation carries random mutations that either enhance or detract from the design’s fitness for purpose; these mutations are either carried into future generations or filtered out.
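The generational process described above can be sketched as a minimal mutation-and-selection loop. This is an illustrative assumption, not the software used for the installation: the genome (a list of numbers standing in for, say, control-point radii of a form), the target profile and the fitness function are all hypothetical.

```python
import random
from typing import Callable, List

# Hypothetical representation: a form described by a list of numeric parameters.
Genome = List[float]


def mutate(genome: Genome, rng: random.Random, rate: float = 0.2, scale: float = 0.1) -> Genome:
    """Return a copy of `genome` in which each gene is randomly perturbed with probability `rate`."""
    return [g + rng.gauss(0.0, scale) if rng.random() < rate else g for g in genome]


def evolve(
    fitness: Callable[[Genome], float],
    genome_length: int,
    population_size: int = 20,
    survivors: int = 5,
    generations: int = 50,
    seed: int = 0,
) -> Genome:
    """Run a simple mutation-and-selection loop and return the fittest genome found."""
    rng = random.Random(seed)
    # Generation zero: random forms.
    population = [
        [rng.uniform(0.0, 1.0) for _ in range(genome_length)]
        for _ in range(population_size)
    ]
    for _ in range(generations):
        # Rank the generation: higher fitness means better suited to the purpose.
        population.sort(key=fitness, reverse=True)
        # The fittest forms (and the mutations they carry) pass to the next generation...
        parents = population[:survivors]
        # ...the rest are filtered out and replaced by mutated offspring of the survivors.
        offspring = [mutate(rng.choice(parents), rng) for _ in range(population_size - survivors)]
        population = parents + offspring
    return max(population, key=fitness)


# Usage with a hypothetical target profile: fitness is the negative squared error.
target = [0.2, 0.5, 0.9, 0.5, 0.2]


def profile_fitness(genome: Genome) -> float:
    return -sum((a - b) ** 2 for a, b in zip(genome, target))


best = evolve(profile_fitness, genome_length=len(target))
```

Keeping the top `survivors` unchanged (elitism) means the best fitness never decreases from one generation to the next; other selection schemes, such as tournament or fitness-proportionate selection, would serve the same purpose.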

INNOVATION

We revisited the “Armature”, an idea we first developed for the infrastructural core of the Gypsy Trail Residence. Defined by its performance and distilled into a precise configuration and operation, the Armature creates different environments around and adjacent to itself. In this work we asked: “Given the consequential mutation of the real object and the environment it creates, could the object become the environment?”

IMPACT

We developed three ways of modulating the relationship between exhibition-goers and the object-environment.

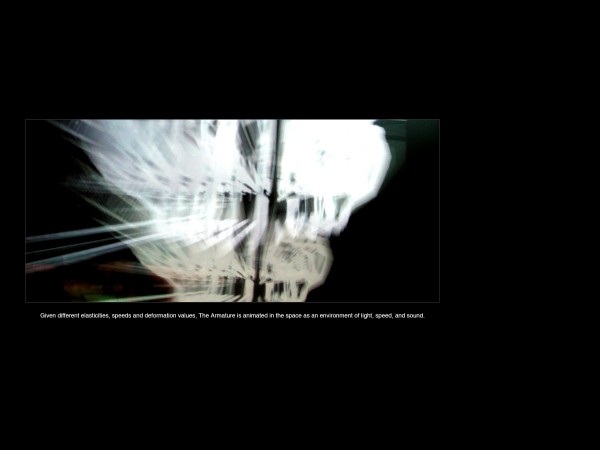

Slicing: the animation software was programmed with different levels of elasticity, speed and deformation. The model had a built-in memory: the object always reconfigured itself, and when ‘triggered’, the mutation resumed. The process of animation is itself ‘objective’; the variation in repetition is neither subjective nor hierarchical. It derives its meaning from data, its form from time.

Animating: triggers distributed in floor fields activate the projected construct as a dissection of an organic unit that expands, contracts, and envelops. This interaction challenges the relationship between the viewer and the object, constantly re-investigating its ‘object-ness’. Sound technology further enhances the affect: localized hypersonic sound beams developed by Robotics International scramble the sound until the waves hit a surface, creating a 3-D sound space.

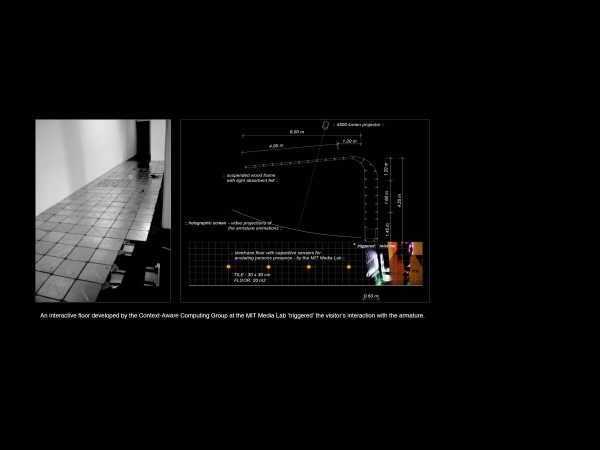

Installation: the “interactive floor” developed by the Context-Aware Computing Group at the MIT Media Lab became an integral part of the gallery installation and enhanced, or ‘triggered’, the visitor’s interaction with the Armature. Once the floor is triggered, the sensor data are fed back to the computer, which launches holographic animations and digital environments. A special prototype of the modular steel floor analyzes changes in the floor’s capacitance caused by the compression of its foam dielectric.
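The sensing principle can be summarized with the idealized parallel-plate model. This is an approximation we assume here, not a description of the prototype's actual geometry: a floor module and its ground plane act as two plates of area $A$ separated by foam of thickness $d$ and relative permittivity $\varepsilon_r$ (treated as constant under compression).

$$C = \frac{\varepsilon_0 \varepsilon_r A}{d}$$

When a visitor's weight compresses the foam from its rest thickness $d_0$ to $d_0 - \Delta d$, the capacitance rises from $C_0$ to $C$, with a relative change of

$$\frac{\Delta C}{C_0} = \frac{C - C_0}{C_0} = \frac{d_0}{d_0 - \Delta d} - 1 = \frac{\Delta d}{d_0 - \Delta d}$$

For example, compressing the foam by 10% ($\Delta d = 0.1\,d_0$) gives $\Delta C / C_0 = 0.1/0.9 \approx 11\%$, a change large enough to be detected as a footstep and used as a trigger.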


HW&SF WAS SUPPORTED BY

The Netherlands Foundation for Visual Arts, Design and Architecture, Amsterdam; the Consulate General of the Netherlands in New York; Steelcase NYC; and the Context-Aware Computing Group at the MIT Media Lab, Cambridge.